10

85

500

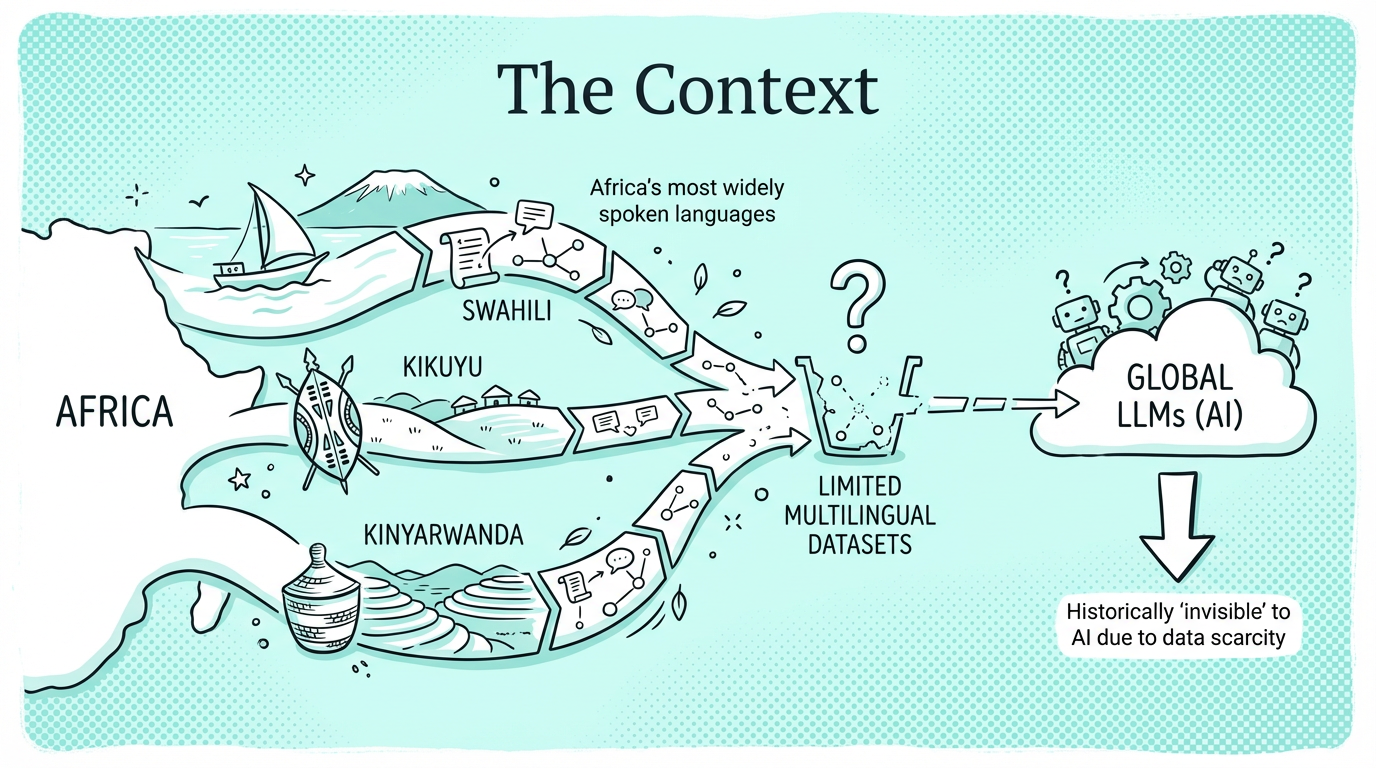

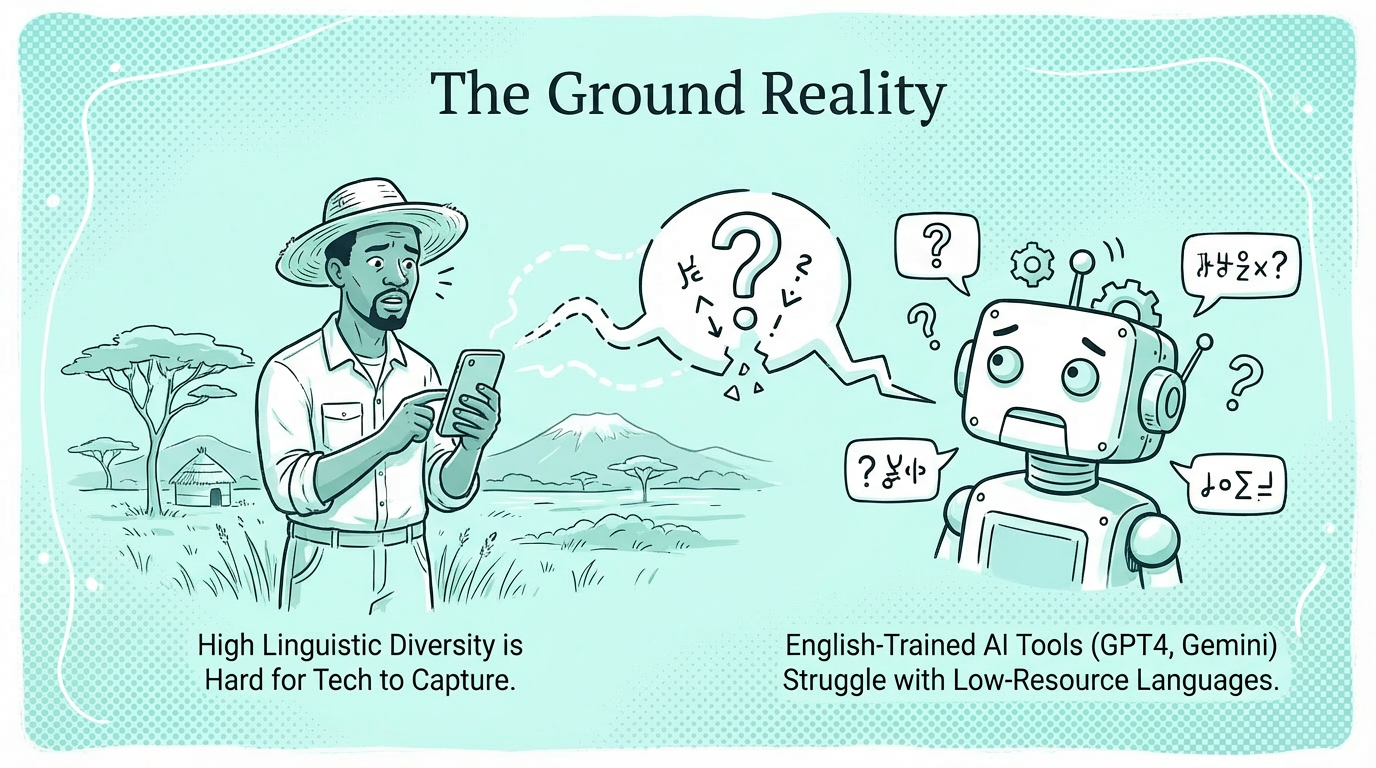

Low-Resource Language Evaluations

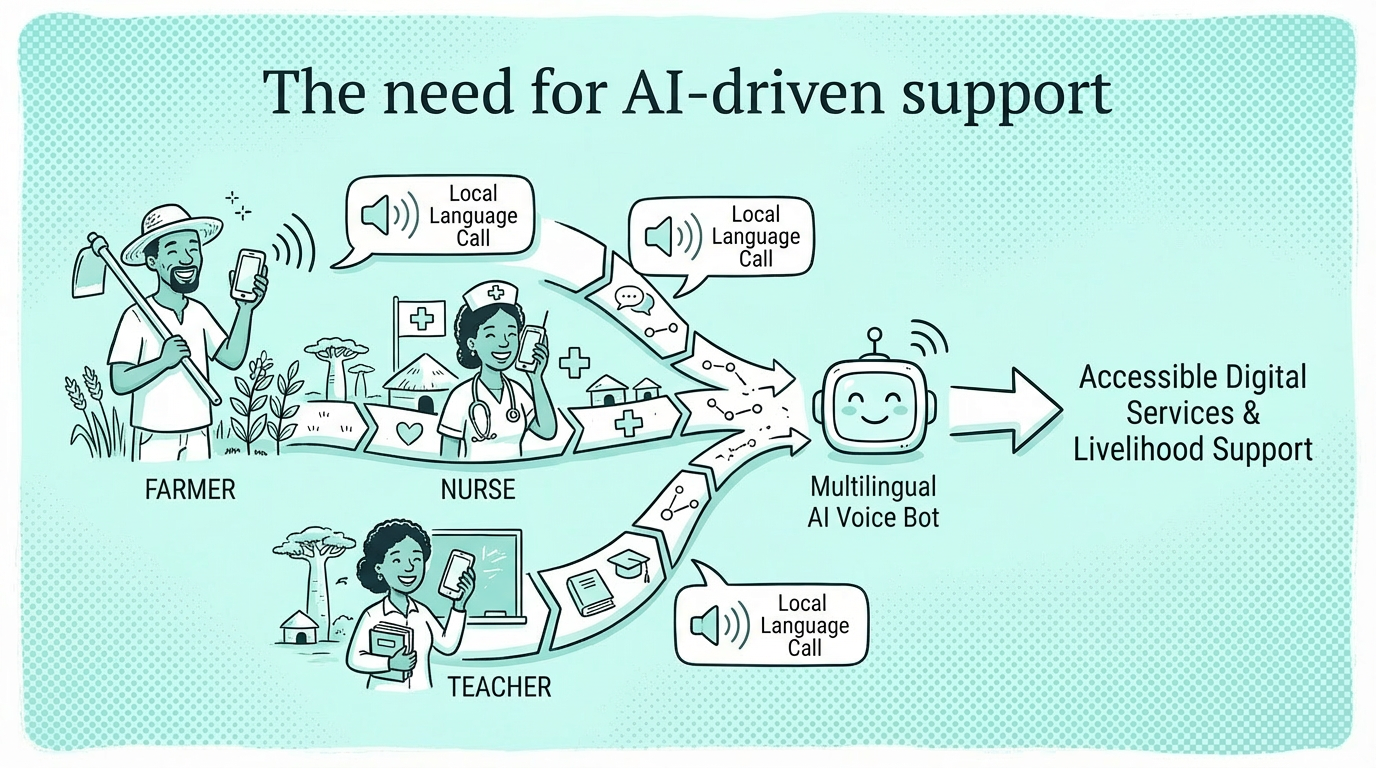

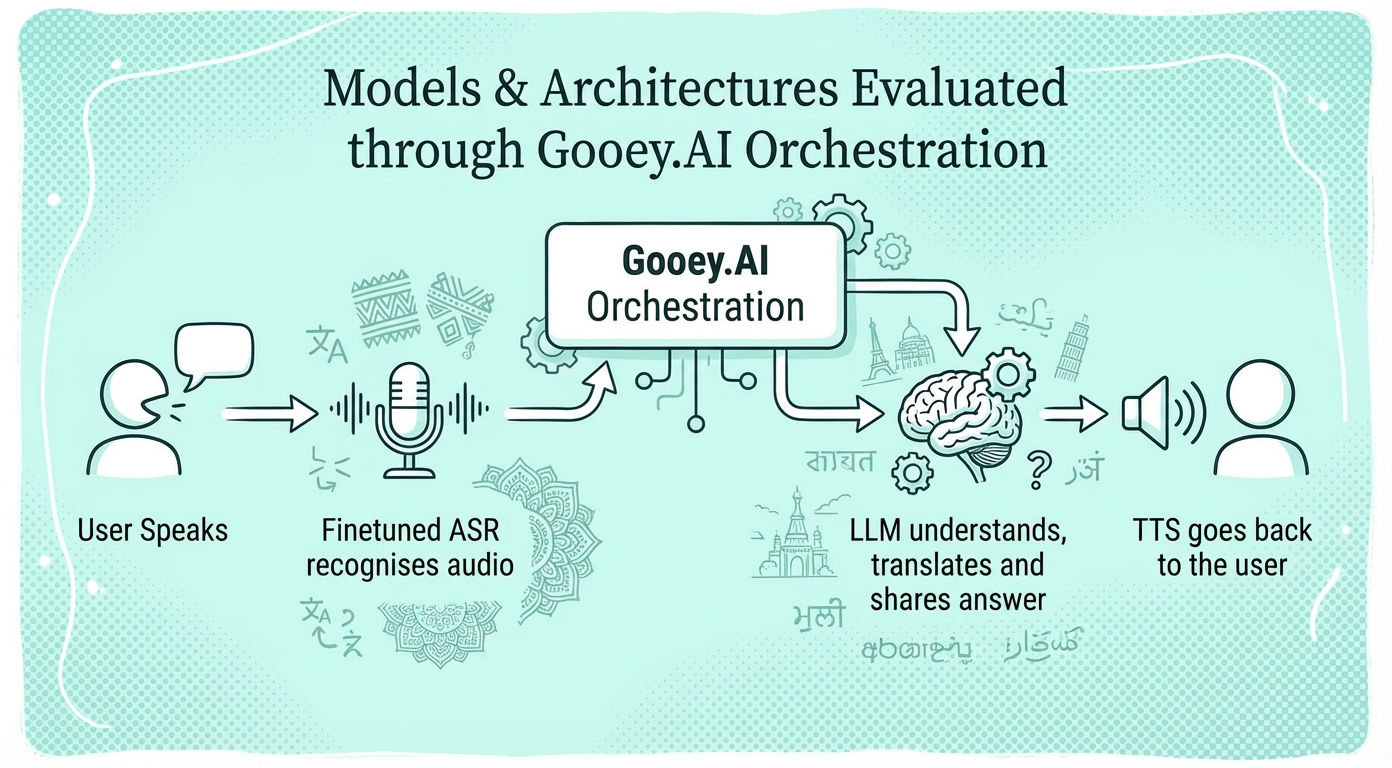

Our goal is to highlight real-world use cases built on common health, agriculture, and education audio questions, and to determine how well and how quickly different AI model combinations respond to them. Accuracy is measured by the semantic similarity of each response to an expert-provided "golden" answer.

Every evaluation, prompt, model setting, and AI workflow is public and immediately forkable, so organizations can test any AI workflow with their own data and use case. Organizations are also encouraged to create audio evaluations with their own data.

Pakistani and Nigerian Health

In April 2026, we evaluated the latest and best-performing private (Gemini 3.1, OpenAI 5.4, Intron) and open source/sovereign deployable (Omnilingual, Gemma 4 26B, Kimi2.5) models on health-related audio questions in languages commonly spoken in Pakistan and Nigeria. In our test, Gemini 3.1 Pro as the LLM, paired with Intron or Meta's Omnilingual as the speech recognition (ASR) model, was the most accurate. That makes the combination appropriate for WhatsApp and other async messaging-based deployments, though it is likely too slow for usable voice-only services.

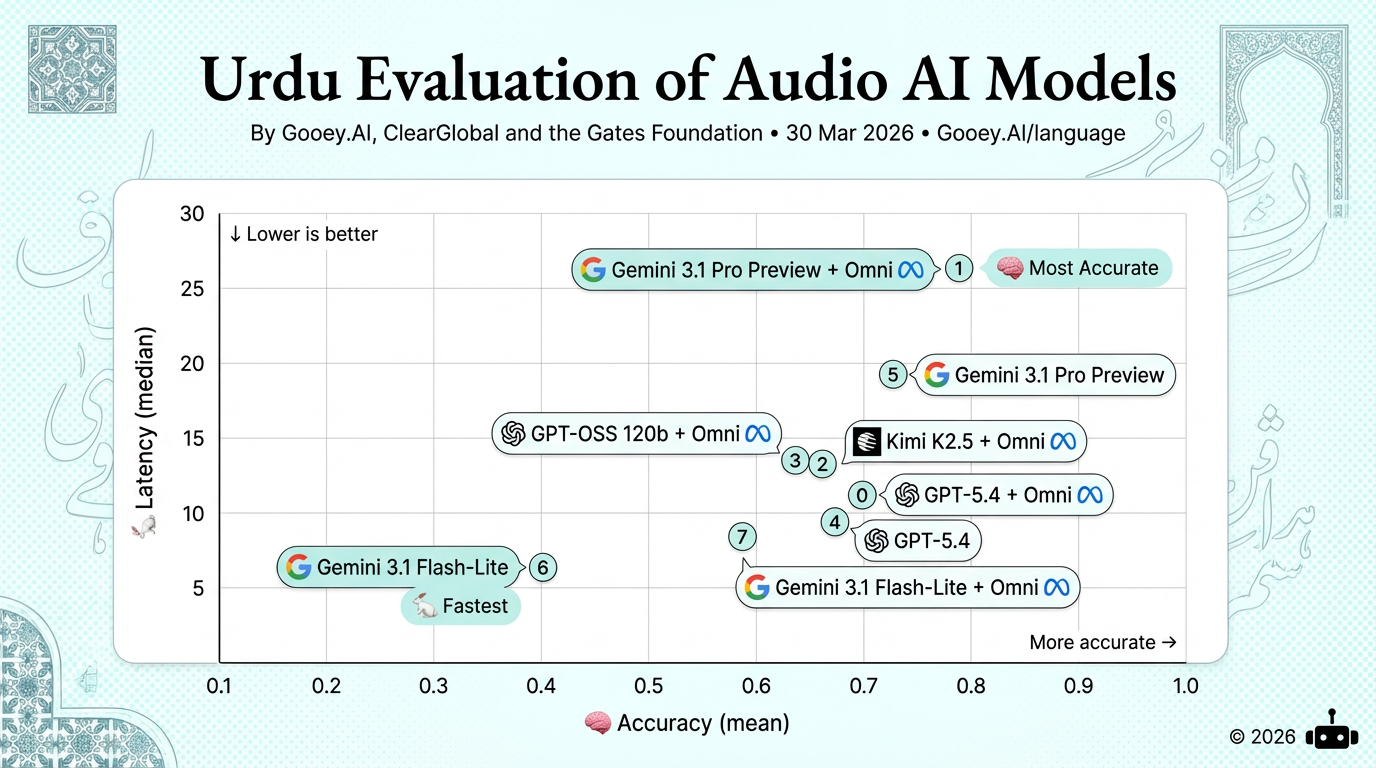

Pakistani Languages

For Urdu, Gemini 3.1 Pro Preview + Omni ranks highest on accuracy but carries ~27s median latency, making it best for async use cases like WhatsApp voice messages. For real-time voice applications, GPT-5.4 + Omni offers the strongest accuracy-speed balance. Gemini 3.1 Flash-Lite is fastest but trades off significant accuracy.

For Pashto, Gemini 3.1 Pro Preview + Omni leads on accuracy (0.66) but at ~15s latency, suiting async use. GPT-5.4 + Omni offers the best speed-accuracy balance (0.65, 8s) for real-time voice. Gemini Flash-Lite is fastest but scores near zero on accuracy.

For Sindhi, Gemini 3.1 Pro Preview is the most accurate but has high latency (~16s), so it is best for async applications. GPT-5.4 + Omni and Gemini Flash-Lite + Omni cluster closely on accuracy (~0.65) with moderate latency, making either viable for real-time voice deployments.

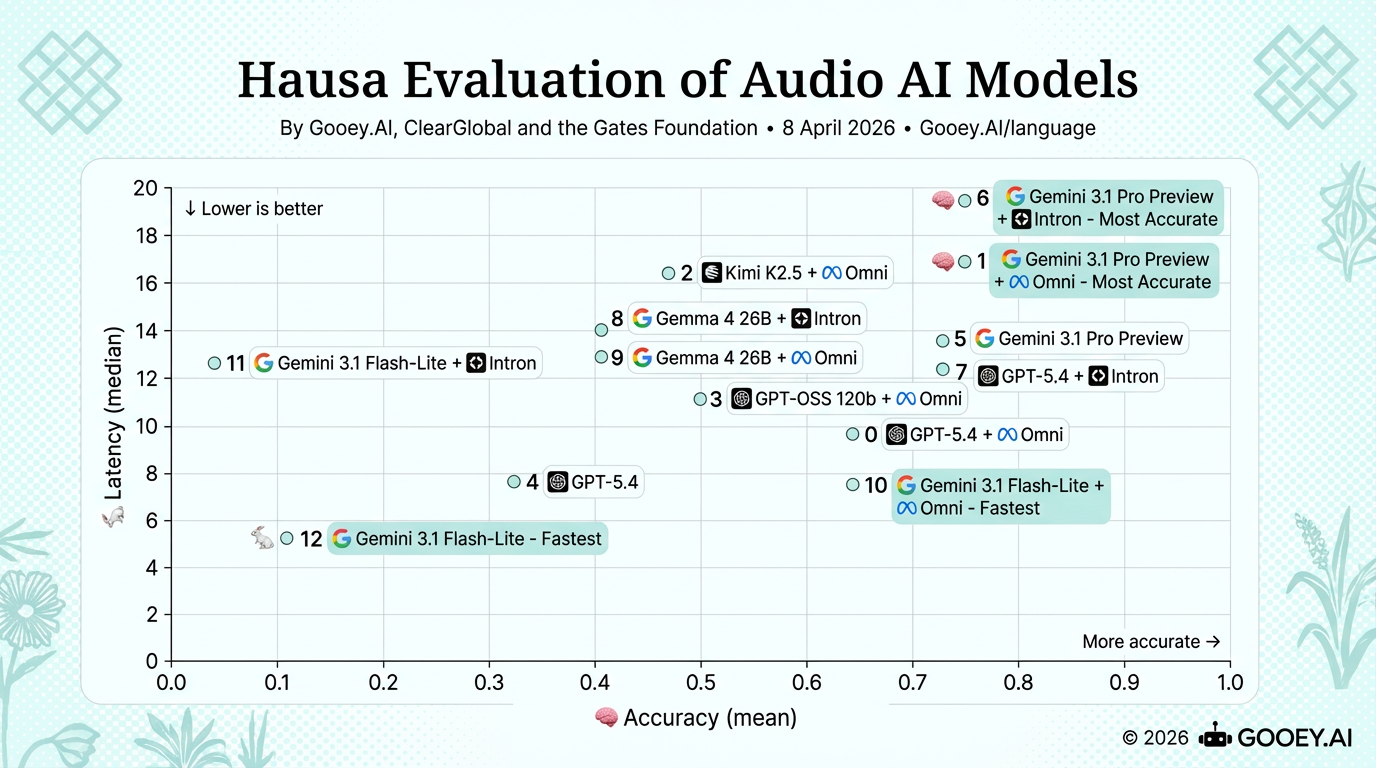

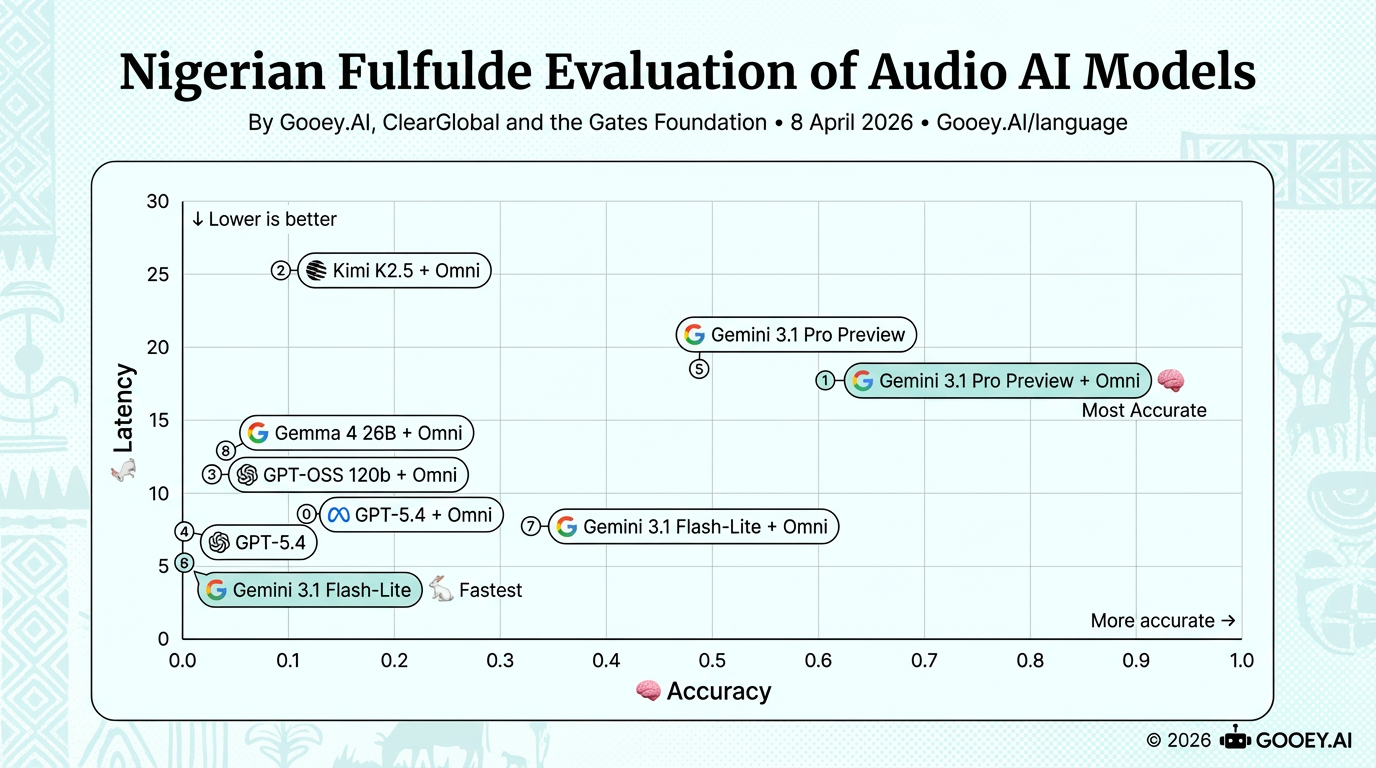

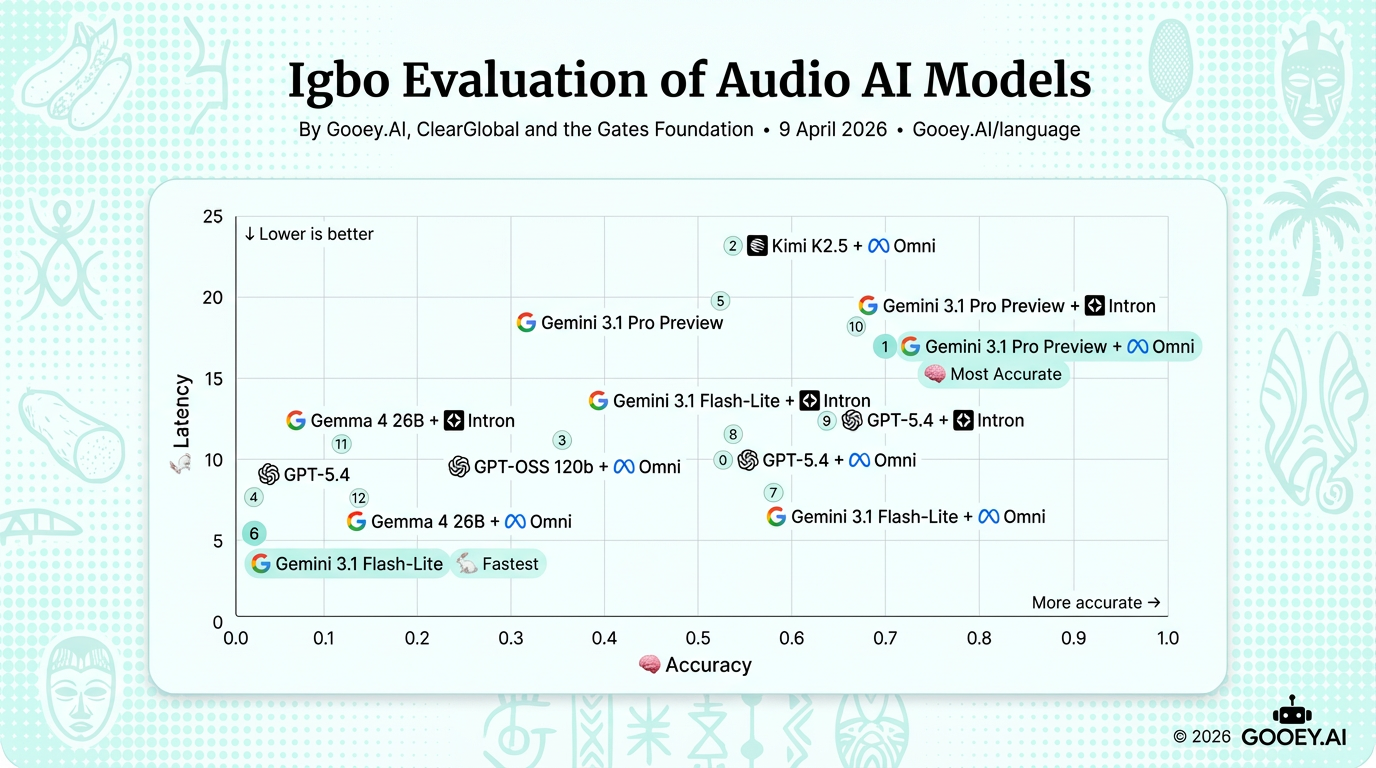

Nigerian Languages

For Hausa, Gemini 3.1 Pro Preview + Intron and Gemini 3.1 Pro Preview + Omni tie for the highest accuracy (~0.75) but differ slightly in speed (Omni ~17s, Intron ~19.5s), making Gemini 3.1 Pro Preview + Omni the better fit for async use. For real-time voice, Gemini 3.1 Flash-Lite + Omni offers the best balance (~0.65 at ~8s). Gemini 3.1 Flash-Lite alone is fastest (~5s) but gives up significant accuracy (~0.1). Notably, Omni pairings consistently deliver the highest accuracy gains, albeit with increased latency in most cases.

For Nigerian Fulfulde, Gemini 3.1 Pro Preview + Omni is the most accurate (~0.6) at ~18s latency. Gemini 3.1 Flash-Lite is the fastest (~5s) but with negligible accuracy, while Gemini 3.1 Flash-Lite + Omni provides a reasonable speed-accuracy balance (~0.3 at ~8s). Overall accuracy remains low across models. Notably, Nigerian Fulfulde shows a wider performance gap, with most models clustering at very low accuracy except the top Gemini stack.

For Igbo, Gemini 3.1 Pro Preview + Omni ranks highest on accuracy (~0.7) at ~17s latency, making it best suited for async use cases. For real-time voice, Gemini 3.1 Flash-Lite + Omni offers the strongest speed–accuracy balance (~0.6 at ~8s). Gemini 3.1 Flash-Lite is the fastest (~6s) but with near zero accuracy. Notably, Omni and Intron pairings consistently improve accuracy across models, with a latency tradeoff.

For Yoruba, Gemini 3.1 Pro Preview + Omni ranks most accurate (~0.85) but at ~17s latency. GPT-5.4 + Omni gives strong accuracy (~0.55) at ~8s, best for real-time voice. Notably, Intron Voice API pairings consistently add accuracy but increase latency significantly.

For Pidgin, Gemini 3.1 Pro Preview + Intron Voice API tops accuracy but at ~18s latency. GPT-5.4 is both fastest and highly accurate (~0.7), making it the standout choice for real-time voice. The Intron Voice API integration notably boosts accuracy across models.
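The async versus real-time recommendations above amount to picking the most accurate stack that fits a latency budget. The following is a minimal sketch of that selection, using the approximate Hausa figures quoted in the text. The `StackResult` type, the `best_within_budget` helper, and the 10s and 30s budgets are illustrative assumptions, not part of the published evaluation tooling.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class StackResult:
    name: str
    accuracy: float   # judge score on a 0-1 scale
    latency_s: float  # median latency in seconds


# Approximate Hausa figures as quoted in the text above.
HAUSA: List[StackResult] = [
    StackResult("Gemini 3.1 Pro Preview + Omni", 0.75, 17.0),
    StackResult("Gemini 3.1 Pro Preview + Intron", 0.75, 19.5),
    StackResult("Gemini 3.1 Flash-Lite + Omni", 0.65, 8.0),
    StackResult("Gemini 3.1 Flash-Lite", 0.10, 5.0),
]


def best_within_budget(
    results: List[StackResult], max_latency_s: float
) -> Optional[StackResult]:
    """Return the most accurate stack within the latency budget.

    Ties on accuracy are broken in favor of the faster stack.
    Returns None when no stack meets the budget.
    """
    eligible = [r for r in results if r.latency_s <= max_latency_s]
    if not eligible:
        return None
    return max(eligible, key=lambda r: (r.accuracy, -r.latency_s))


# Hypothetical budgets: ~10s for real-time voice, ~30s for async messaging.
realtime_pick = best_within_budget(HAUSA, max_latency_s=10.0)
async_pick = best_within_budget(HAUSA, max_latency_s=30.0)
```

With these inputs the real-time pick is the Flash-Lite + Omni stack and the async pick is the Pro Preview + Omni stack, matching the Hausa recommendation in the text. The budgets themselves are a deployment decision and would need tuning per service.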

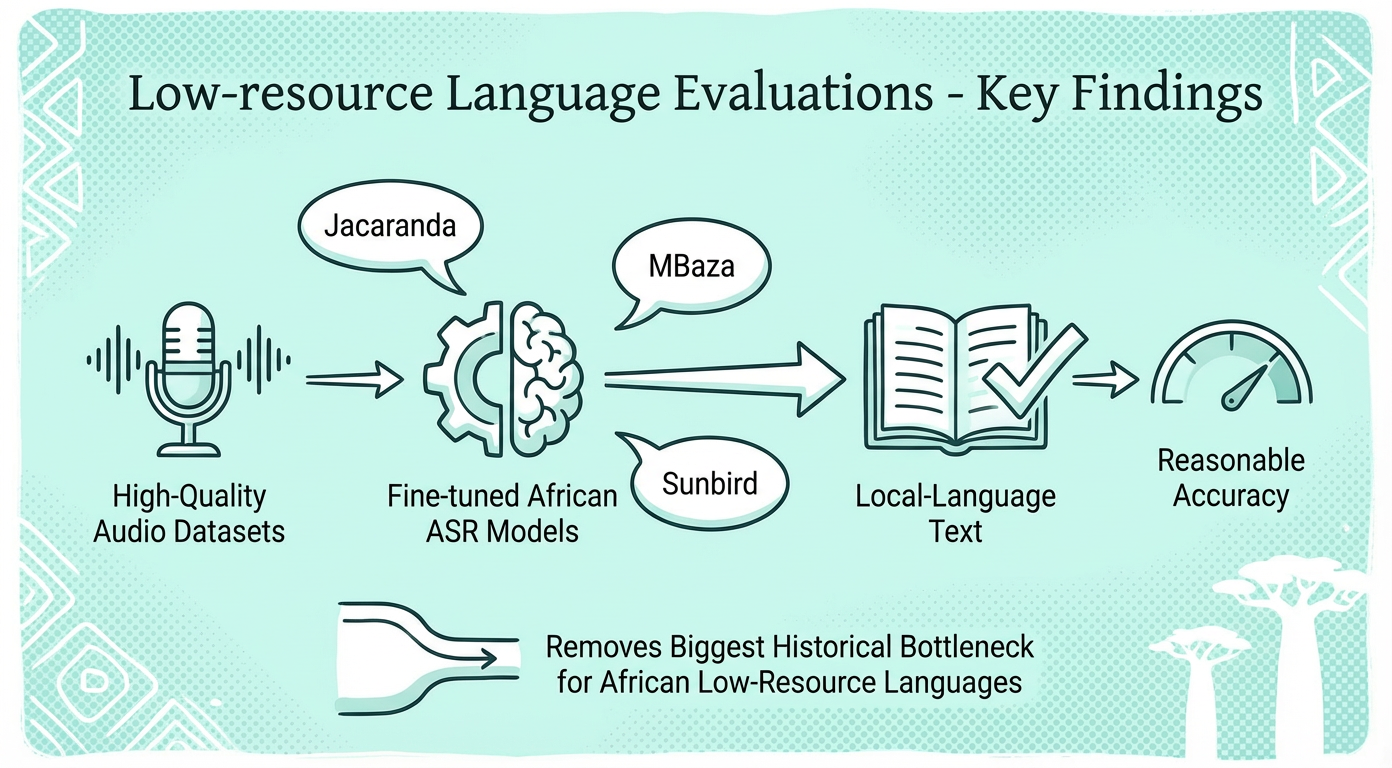

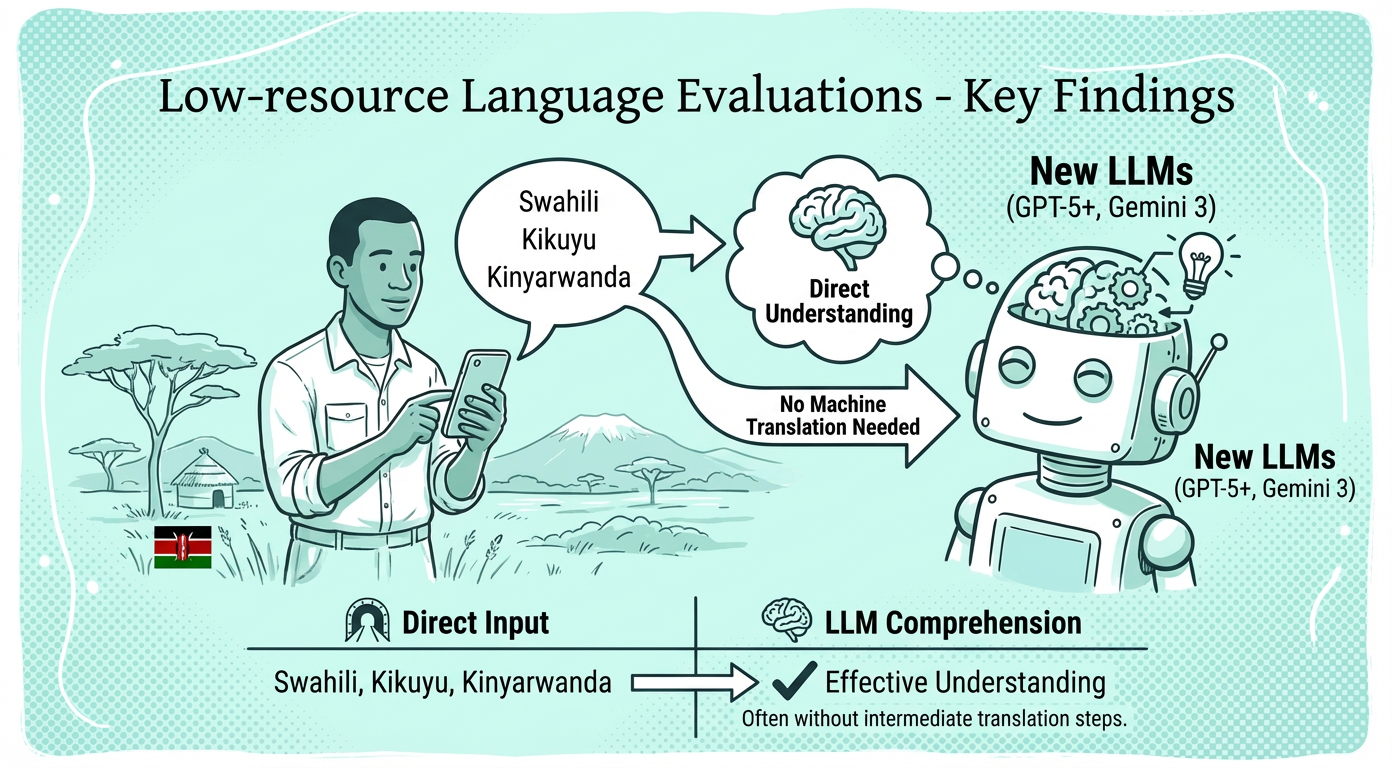

Swahili, Kinyarwanda and Kikuyu

In December 2025, we tested new LLM+ASR AI workflows to understand real-world deployment of Swahili, Kikuyu, and Kinyarwanda voice applications. Fine-tuned African ASR models combined with modern LLMs (GPT-5.1, Gemini 3) achieved 85-96% accuracy with 4-10 second response times, fast enough for phone-based services. In short, voice AI for many African languages is now technically viable for WhatsApp and basic phone deployments at scale.

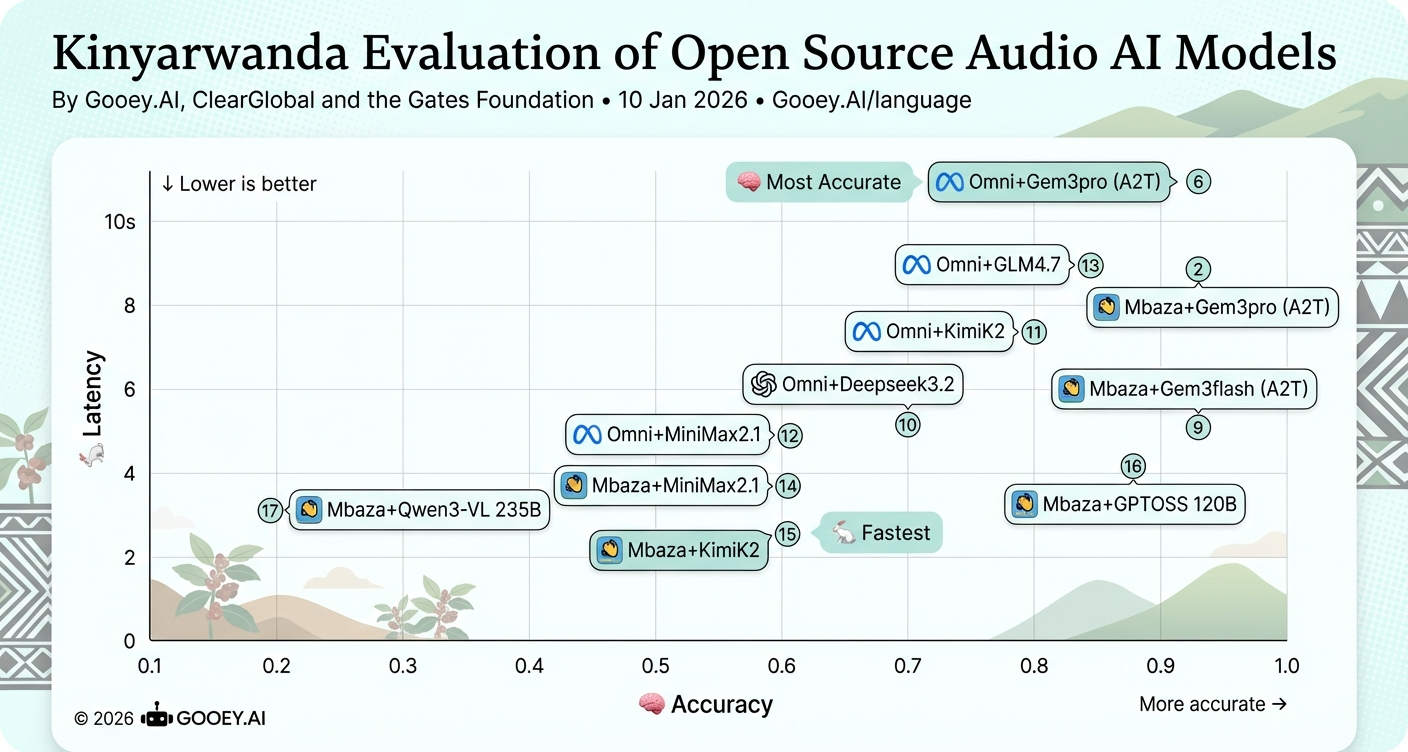

Read the Paper

With Kinyarwanda, we found that newer open-source models (KimiK2, GLM4.7, MiniMax2.1, DeepSeek3.2) offer a clear accuracy uplift over Qwen3. For phone-based voice apps, MBaza combined with GPTOSS provides the best speed/accuracy tradeoff (~0.88 accuracy at ~4s latency) and is likely the most appropriate choice. For asynchronous use (WhatsApp voice), higher-accuracy but slower stacks such as MBaza+Gem3pro yield more accurate answers despite increased latency on the constrained networks and basic phones in use today.

With Swahili, we found that the OpenAI realtime models tended to perform poorly on both accuracy and latency. The fine-tuned Jacaranda Health model in combination with GPT 4.1 and 5.1 offered a reasonable combination of speed and accuracy and is hence likely the most appropriate for voice applications. For async applications like WhatsApp voice messages, Jacaranda plus Gemini 3 Pro gave the most accurate answers.

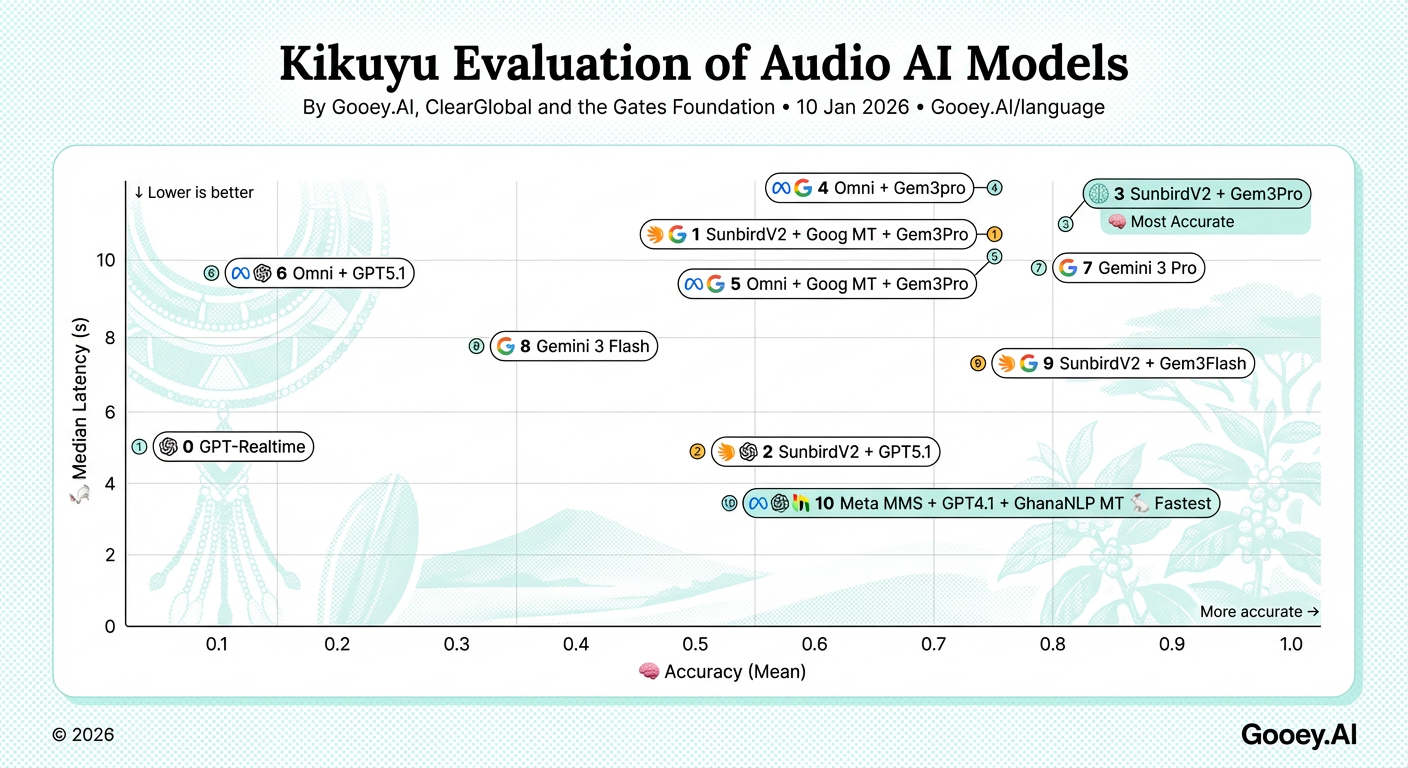

In general, the top-performing models in Kikuyu collectively perform worse than those in Swahili and Kinyarwanda. Additionally, as of Dec 2025, the machine translation models we tested between English and Kikuyu were limited to GhanaNLP's. Nonetheless, SunbirdV2 + Gemini 3 (both Pro and Flash) offer reasonable accuracy with median latencies of 7 and 10 seconds respectively. These latencies are low enough for async voice messaging but are likely still too slow for usable voice-based feature phone applications.

Theory of Change

New LLM generations (GPT-5+, Gemini 3) dramatically improved comprehension of local-language text.

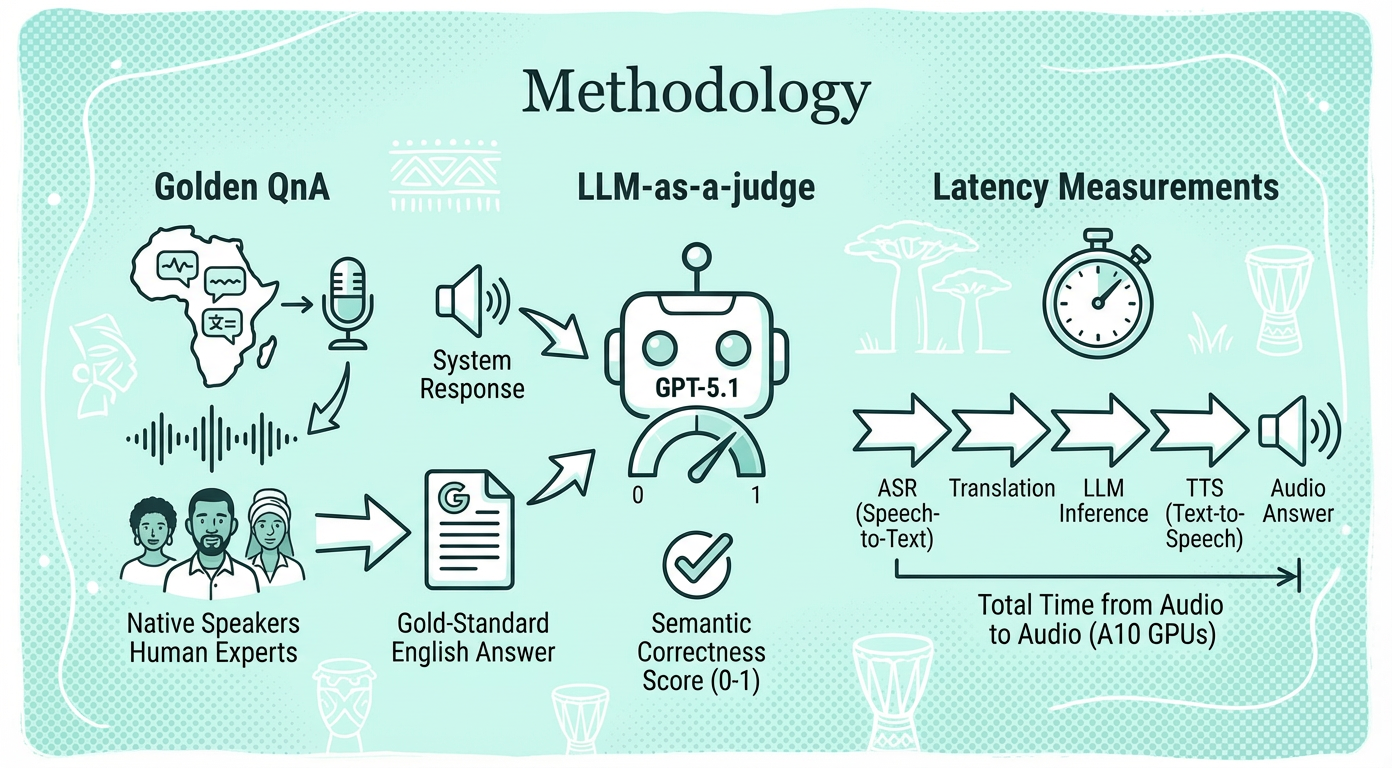

Native speakers record audio questions in three African languages; human experts provide gold-standard English answers for consistent evaluation across systems.

LLM-as-a-judge

GPT-5.1 scores system responses against expert answers on a 0-1 scale, measuring semantic correctness, since evaluation in African languages remains unreliable.
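As a rough illustration of this scoring step, the sketch below builds a judge prompt and parses the reply into a score clamped to the 0-1 range. The prompt wording, the function names, and the `ask_model` callable (a stand-in for whatever client sends the prompt to the judge model) are all assumptions for illustration. The evaluation's actual prompts are the published ones, not this text.

```python
import re
from typing import Callable

# Hypothetical prompt wording, for illustration only.
JUDGE_TEMPLATE = (
    "You are grading an answer to a user's question.\n"
    "Question: {question}\n"
    "Expert answer: {golden}\n"
    "System answer: {candidate}\n"
    "Reply with only a number between 0 and 1 indicating how well the "
    "system answer matches the meaning of the expert answer."
)

NUMBER = re.compile(r"-?(?:\d+(?:\.\d+)?|\.\d+)")


def build_judge_prompt(question: str, golden: str, candidate: str) -> str:
    """Fill the template with one evaluation item."""
    return JUDGE_TEMPLATE.format(
        question=question, golden=golden, candidate=candidate
    )


def parse_score(reply: str) -> float:
    """Take the first number in the reply and clamp it to [0, 1]."""
    match = NUMBER.search(reply)
    if match is None:
        raise ValueError(f"no numeric score in judge reply: {reply!r}")
    return min(1.0, max(0.0, float(match.group())))


def judge(
    question: str,
    golden: str,
    candidate: str,
    ask_model: Callable[[str], str],
) -> float:
    """Score one system answer; ask_model sends a prompt to the judge LLM."""
    prompt = build_judge_prompt(question, golden, candidate)
    return parse_score(ask_model(prompt))
```

Passing the model call in as a plain callable keeps the sketch independent of any particular vendor SDK and makes the parsing logic easy to test with a stub.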

Latency measurements

Measures total time from audio question to audio answer, including ASR, translation, LLM inference, and TTS on A10 GPUs.
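One way to collect this kind of end-to-end figure is to time each stage and the whole pipeline with a monotonic clock, then take the median over repeated runs. The sketch below assumes each stage is a plain Python callable. The `run_timed` and `median_total` helpers are hypothetical, and the stage functions passed in stand in for real ASR, translation, LLM, and TTS calls.

```python
import statistics
import time
from typing import Any, Callable, Dict, List, Tuple

Stage = Tuple[str, Callable[[Any], Any]]


def run_timed(stages: List[Stage], payload: Any) -> Tuple[Any, Dict[str, float]]:
    """Run stages in order, recording per-stage and total wall-clock seconds."""
    timings: Dict[str, float] = {}
    start_total = time.perf_counter()
    for name, stage_fn in stages:
        start = time.perf_counter()
        payload = stage_fn(payload)
        timings[name] = time.perf_counter() - start
    timings["total"] = time.perf_counter() - start_total
    return payload, timings


def median_total(stages: List[Stage], inputs: List[Any]) -> float:
    """Median end-to-end latency across a list of inputs."""
    totals = [run_timed(stages, item)[1]["total"] for item in inputs]
    return statistics.median(totals)
```

A pipeline would be passed as an ordered list such as `[("asr", transcribe), ("translation", translate), ("llm", answer), ("tts", synthesize)]`, where the four function names are placeholders for the real model calls.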
